Imagine you're in a laboratory, surrounded by the hum of machines, the glow of screens filled with data, and the weight of uncertainty. You're conducting an experiment where the results seem elusive. No matter how carefully you set up, the data refuses to align, and each trial only brings you closer to a question rather than an answer. The only way to get reliable data is by repeating the test multiple times. Quantum computing works similarly. So, just like in any other experiment where repeated trials yield the clearest picture, quantum systems rely on repetitions to reach the truth.

But why is initialization crucial? Well, think of it like this: without a stable starting point for each trial, every subsequent experiment is like walking blindfolded into unknown territory. That's why resetting to the initial state is a crucial step - it doesn’t fix errors, but it ensures that every repetition starts from a known, stable state, increasing the chances of obtaining accurate results. The need for repetition is in the nature of quantum systems, not a malfunction.

In this blog post, we'll explore the state-of-the-art initialization method, namely the active reset, and why active reset stands out as a solution that reduces experimental time, boosts initialization performance, and enables practical experimental timescales for quantum error correction codes.

More than just an initial step

Getting a qubit to a known starting point - usually the ground state - is a critical step in running any quantum algorithm. How efficiently this is done directly impacts the algorithm’s overall performance. Without a known starting state, controlling the qubit becomes meaningless and the results are unusable. That’s why proper initialization is one of the fundamental DiVincenzo criteria for quantum computing (DiVincenzo, 2000). Initialization is equally important for error correction.

The challenge of passive reset

One traditional way to reset a qubit is through passive initialization, a method where the qubit naturally returns to its ground state over time. Superconducting qubits are quantum systems whose states are encoded in the energy levels of a superconducting circuit. On average, these circuits can naturally decay to their ground state over time, as thermal fluctuations in a cold environment are insufficient to excite the qubit. It might seem like a simple solution, but the passive reset has its drawbacks.

Here’s the problem: as qubit lifetimes improve, this passive approach introduces impractically long dead time in the experiment. Passive reset is achieved by inserting wait times of typically at least five to seven times the qubit's lifetime (T1). For transmons, with an average lifetime of 10 to 100 microseconds, the minimum waiting time per reset is already 50 to 500 microseconds. As the field works to increase qubit lifetimes, passive reset becomes even more impractical. The best devices now exceed 300 microseconds in T1, resulting in more than 1.5 milliseconds per reset! If we manage to create qubits with 5 ms lifetimes, the passive reset time would grow to 25 to 35 milliseconds, making active reset a necessity. In addition, residual qubit excitations can produce initialization errors.
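To make the arithmetic concrete, here is a minimal sketch of the wait-time rule of thumb. It assumes a simple exponential T1 decay from a fully excited qubit and, by default, a zero thermal population; the function names and the `p_thermal` parameter are illustrative and not part of any Qblox API.

```python
import math

def passive_reset_wait(t1_us, n_lifetimes=5.0):
    """Wait time in microseconds for a passive reset of n_lifetimes * T1."""
    return n_lifetimes * t1_us

def residual_excited_population(wait_us, t1_us, p_thermal=0.0):
    """Excited-state population left after waiting.

    Assumption: pure exponential relaxation from a fully excited qubit
    toward a steady-state population p_thermal.
    """
    return p_thermal + (1.0 - p_thermal) * math.exp(-wait_us / t1_us)

# T1 values taken from the text: typical transmons and the best devices.
for t1_us in (10.0, 100.0, 300.0):
    for n in (5.0, 7.0):
        wait_us = passive_reset_wait(t1_us, n)
        left = residual_excited_population(wait_us, t1_us)
        print(f"T1 = {t1_us:5.0f} us, wait = {wait_us:6.0f} us, residual = {left:.2e}")
```

Under this simple model, waiting five lifetimes still leaves about 0.7% excited-state population and seven lifetimes about 0.09%, which is why the wait cannot be shortened much without hurting initialization.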

These errors stem from the stochastic nature of passive reset - sometimes the qubit doesn’t fully return to its ground state, leading to errors in the next experiment. As quantum systems scale up and error correction is implemented, this passive method will struggle to keep pace with the speed needed to run complex quantum algorithms effectively.

Active reset

Unlike passive initialization, active reset strategies actively force the qubit to its ground state. In the conditional variant, the qubit is measured and a π-pulse is applied if it is found in the excited state. It’s a quick, intentional approach to reset the qubit, reducing the overall experiment time and enhancing initialization fidelity, leading to more reliable quantum algorithms. Active reset can be performed unconditionally or conditionally; here we focus on the latter.
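The conditional variant can be pictured as a measure-then-flip step: read out the qubit and apply the π-pulse only if the readout reports the excited state. The toy Monte Carlo below is a sketch under loud assumptions (an ideal π-pulse, a symmetric readout error, no relaxation during the feedback); it is not how the hardware implements the loop, and all names are hypothetical.

```python
import random

def conditional_reset(is_excited, readout_error, rng):
    """One measure-then-flip round; returns the qubit state afterwards."""
    # Assumption: the readout reports the wrong state with probability
    # readout_error, independent of the true state.
    misread = rng.random() < readout_error
    reported_excited = (not is_excited) if misread else is_excited
    if reported_excited:
        is_excited = not is_excited  # ideal pi-pulse flips the qubit
    return is_excited

def residual_excitation(p_excited, readout_error, shots=100_000, seed=1):
    """Fraction of shots left in the excited state after one reset round."""
    rng = random.Random(seed)
    excited_after = 0
    for _ in range(shots):
        state = rng.random() < p_excited  # True means excited
        if conditional_reset(state, readout_error, rng):
            excited_after += 1
    return excited_after / shots

print(residual_excitation(p_excited=0.5, readout_error=0.05))
```

In this simplified model the qubit ends up excited exactly when the readout was wrong, so the residual excitation tracks the readout error rather than the starting population. That is the reason state discrimination matters so much for reset fidelity.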

Conditional active reset is incredibly fast, with systems like Qblox Cluster achieving a deterministic, high-throughput feedback loop under 650 ns. In practical terms, this means that the reset process can be sped up by a factor of 125 to 1250 compared to passive methods. Even for qubits with shorter lifetimes, this speed-up is tremendous, as visualized below. It’s not just about speed, though - active reset can also improve the fidelity of initialization, meaning that each qubit is more reliably set to its ground state, making quantum experiments far more accurate and reliable.
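As a rough check on these numbers, the speed-up is simply the passive wait divided by the duration of one active reset. The sketch below is an estimate under stated assumptions: it takes the 50 to 500 microsecond passive waits quoted earlier and compares them against two hypothetical active reset durations, the 650 ns feedback latency on its own and a 400 ns value that reproduces a factor of 125 to 1250. A real reset also includes readout integration and the π-pulse itself, so actual factors will differ.

```python
def speedup(passive_wait_us, active_reset_us):
    """Ratio of passive wait time to active reset duration."""
    if active_reset_us <= 0:
        raise ValueError("active reset duration must be positive")
    return passive_wait_us / active_reset_us

# Passive waits of 5 * T1 for T1 = 10 us and T1 = 100 us (from the text).
PASSIVE_WAITS_US = (50.0, 500.0)

# Hypothetical active reset durations, in microseconds.
ACTIVE_RESET_US = {
    "650 ns feedback latency only": 0.65,
    "400 ns effective reset": 0.40,
}

for label, duration_us in ACTIVE_RESET_US.items():
    low, high = (speedup(wait, duration_us) for wait in PASSIVE_WAITS_US)
    print(f"{label}: {low:.0f}x to {high:.0f}x")
```

Whichever duration is used, the gain stays at roughly two to three orders of magnitude, and it grows linearly with T1.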


Active reset with Qblox

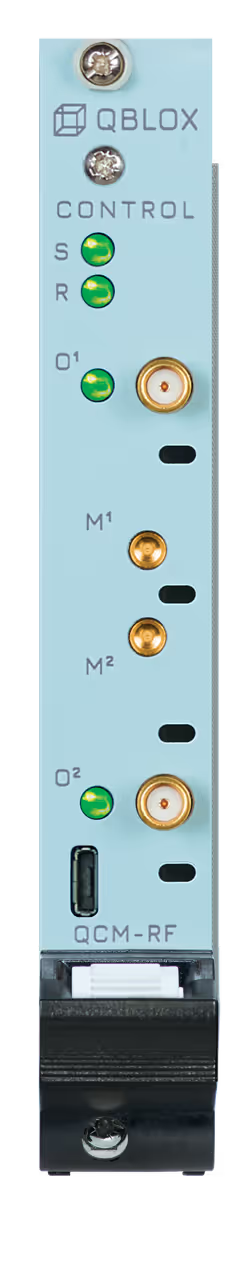

The experimental setup is built around the Qblox Cluster Mainframe. We offer gigahertz readout capabilities through either our Qubit Readout Module RF (QRM-RF) or our Qubit Readout and Control (QRC) module. Qubit control can be achieved using our Qubit Control Module RF (QCM-RF) module or the QRC.

To demonstrate active reset, a readout resonator operating in the GHz range is used. This resonator's frequency is affected by the qubit's state. By measuring the resonator's frequency, a feedback loop is initiated that generates specific waveforms based on the qubit's detected state. Speed is critical, both for initial setup and ongoing operations, because the feedback needs to happen within the qubit's decoherence time to maintain accuracy throughout the quantum computation. Qblox’s setup handles this with ease. Our feedback protocol, LINQ, provides deterministic, high-throughput feedback with all-to-all connectivity in under 650 ns, ensuring a reliable qubit reset before decoherence occurs.

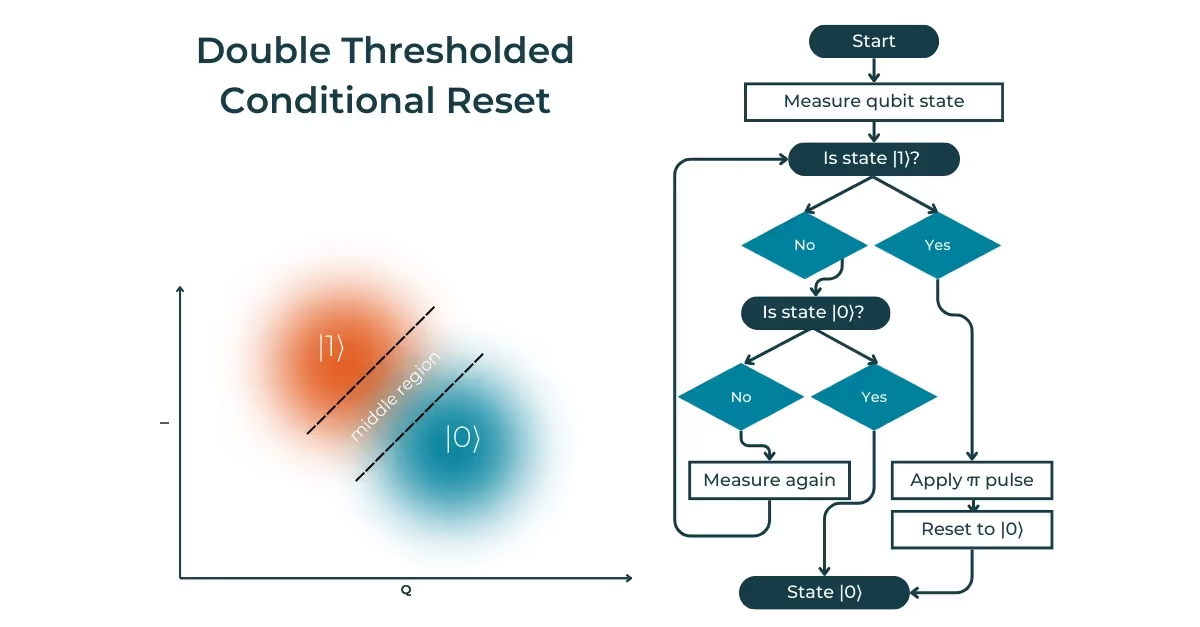

Conditional reset: Single vs. double threshold

A reliable qubit reset requires distinguishing between the qubit’s two possible states. In cases where the two distributions are well-separated, a single threshold can accurately identify the qubit’s state with sufficient fidelity. To establish the threshold, single-shot readout (SSRO) measurements are performed as a calibration step; they determine the distributions of the qubit states. The integration time of the SSRO plays a critical role in determining the separation between these distributions.
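The calibration step can be sketched with synthetic data. The example below assumes the single-shot results have already been projected onto one axis, that both states give Gaussian clusters of equal width, and that the excited state sits at the higher value; the midpoint rule and the function names are illustrative choices, not a description of the Qblox calibration routine.

```python
import random
import statistics

def calibrate_threshold(ground_shots, excited_shots):
    """Midpoint between the two cluster means (sensible for equal widths)."""
    return 0.5 * (statistics.fmean(ground_shots) + statistics.fmean(excited_shots))

def assignment_fidelity(ground_shots, excited_shots, threshold):
    """1 - (P(excited | prepared ground) + P(ground | prepared excited)) / 2."""
    p_wrong_ground = sum(s > threshold for s in ground_shots) / len(ground_shots)
    p_wrong_excited = sum(s <= threshold for s in excited_shots) / len(excited_shots)
    return 1.0 - 0.5 * (p_wrong_ground + p_wrong_excited)

# Synthetic SSRO data: two clusters separated by four standard deviations.
rng = random.Random(0)
ground = [rng.gauss(0.0, 1.0) for _ in range(20_000)]
excited = [rng.gauss(4.0, 1.0) for _ in range(20_000)]

threshold = calibrate_threshold(ground, excited)
fidelity = assignment_fidelity(ground, excited, threshold)
print(f"threshold = {threshold:.3f}, assignment fidelity = {fidelity:.4f}")
```

A longer integration time typically narrows the clusters relative to their separation, which in this model corresponds to moving the two means further apart in units of the standard deviation.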

With a coplanar waveguide resonator operating in the GHz range, shorting the microwave resonator makes it possible to manipulate its frequency response and fine-tune the reset process.

When the two distributions overlap significantly, relying on a single threshold for state discrimination can result in a high rate of mislabeling. To address this, we introduce a second threshold, which divides the data into three regions. Each region is associated with its own conditional feedback response. In this setup, if the qubit state is detected within the middle region (between the two thresholds), the system triggers an additional measurement to refine the state determination. This double thresholding technique improves the fidelity of qubit state identification and therefore the reliability of the reset, reducing the likelihood of errors in subsequent quantum operations.
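The three-region logic can be sketched as follows. This is a minimal illustration, assuming a one-dimensional readout signal with the excited state at higher values; the cap on repeated measurements and the midpoint fallback are assumptions added to keep the loop bounded, and the names are hypothetical.

```python
def classify(signal, low, high):
    """Assign a readout signal to one of the three regions."""
    if signal < low:
        return "ground"
    if signal > high:
        return "excited"
    return "uncertain"

def double_threshold_readout(measure, low, high, max_repeats=3):
    """Measure again while the result falls between the two thresholds.

    Returns (label, number_of_measurements). Assumption: after max_repeats
    inconclusive results, fall back to a single threshold at the midpoint.
    """
    signal = None
    for attempt in range(1, max_repeats + 1):
        signal = measure()
        label = classify(signal, low, high)
        if label != "uncertain":
            return label, attempt
    midpoint = 0.5 * (low + high)
    return ("excited" if signal >= midpoint else "ground"), max_repeats

# Two inconclusive readings followed by a clear excited-state reading.
readings = iter([2.1, 1.8, 3.4])
label, n_measurements = double_threshold_readout(lambda: next(readings), low=1.5, high=2.5)
print(label, n_measurements)  # excited 3
```

The trade-off is time: every extra measurement adds readout and feedback latency, so the width of the middle region balances assignment fidelity against reset duration.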

What makes an experimental setup successful for qubit reset?

It boils down to fast and efficient feedback. Achieving this requires FPGA engineering and the use of multiple instruments (qubit controllers, RF signal generators, digitizers, etc.). The technical requirements for an optimal qubit reset setup include ultra-low feedback latencies.

Qblox simplifies this challenge with an integrated control stack tailored to qubit needs. It is easy to use and combines readout, control, and fast-feedback capabilities in a single system. With a cluster-wide feedback loop under 650 ns, the Qblox Cluster provides fast, precise, and scalable quantum control for advanced real-time operations.

Boosting future quantum experiments with active qubit reset

As quantum computing continues to evolve, the importance of efficient qubit reset cannot be overstated. Active reset, with its speed and reliability, is a key solution that will drive the future of quantum algorithms and error correction. When we talk about quantum error correction, we're addressing one of the biggest hurdles in quantum computing. Active reset is going to be instrumental in speeding up quantum error correction experiments, which will, in turn, make quantum computers much more reliable and able to scale up to tackle complex problems.

Researchers are constantly exploring new avenues, looking for different ways to achieve efficient qubit reset. For example, in coupler-coupled qubit architectures, a mixing pulse can transfer the qubit excitation to a low-quality coupler and readout resonator, which quickly decay to their ground states, allowing for efficient reset without relying on feedback (Marques et al. 2023, McEwen et al. 2021). While this approach might not alleviate QPU issues through advanced control electronics, it presents an interesting avenue for future research and development.

What does the future of qubit reset hold? It will undoubtedly involve continued innovation. The field of quantum computing is dynamic and evolving, and researchers are constantly seeking new ways to improve reset efficiency. One thing is certain: Qblox provides technology that enables fast and scalable feedback, supporting researchers in their efforts to push the boundaries of quantum computing.

Qblox superconducting qubits success story

Fast unconditional reset and leakage reduction in fixed-frequency transmon qubits

At Chalmers University of Technology, researchers used a Qblox control stack, including QCM-RF and QRM-RF modules, to develop a protocol for rapidly resetting fixed-frequency transmon qubits. This protocol achieved a complete reset process in 83 nanoseconds with over 99% fidelity, enhancing quantum error correction and algorithmic performance. The achievement underscores the potential of fixed-frequency transmon qubits in next-generation quantum architectures compatible with the surface code.

Read more about this one and other Qblox success stories here.
