Quantum breakthroughs are no longer rare events. Novel qubit modalities, improved gate fidelities, and increasingly refined fabrication processes continue to push the field forward. Qubit research is steadily reducing noise sources and improving device reproducibility, bringing experimental systems closer to practical performance thresholds.

Despite this momentum, today’s systems still operate in the noisy intermediate-scale quantum (NISQ) regime, where circuit depth trades off directly against fidelity. Physical qubit performance keeps improving, but reaching the 10⁻¹⁵ to 10⁻¹⁸ logical error rates required for useful computation is not achievable through hardware engineering alone.

This is where Quantum Error Correction (QEC) steps in, acting as the indispensable digital coach for the quantum system.

While we celebrate the progress in qubit count and connectivity, the next true breakthrough lies in implementing QEC protocols, such as the Surface Code, which leverage entanglement to redundantly encode a single logical qubit across multiple physical qubits. This paradigm shift, from simply building more qubits to building reliable logical qubits, defines the transition to Fault-Tolerant Quantum Computing (FTQC).

What Is Quantum Error Correction?

Quantum error correction (QEC) is a real‑time feedback technique that protects fragile quantum information by encoding a single logical qubit into an entangled state spread across many physical qubits. This distributed encoding preserves the logical information even when individual qubits suffer errors.

How Does Quantum Error Correction Work in Practice?

Every quantum processor lives under constant assault from its own environment. Stray photons, flux noise, and charge fluctuations conspire to randomize fragile quantum states. Quantum error correction (QEC) turns that same noise from a destructive force into a measurable and correctable signal: noise becomes information and, ultimately, power.

In classical computing, error protection is straightforward: you simply duplicate a bit—turning 0 into 000, for example—so that if one copy flips, the majority vote restores the correct value. Quantum information cannot be protected this way.
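The classical scheme described above can be written in a few lines. This is a minimal sketch for illustration only; the function names `encode` and `majority_vote` are our own and do not come from any library.

```python
def encode(bit: int, copies: int = 3) -> list[int]:
    """Repeat a classical bit, e.g. 0 -> [0, 0, 0]."""
    return [bit] * copies


def majority_vote(bits: list[int]) -> int:
    """Decode by returning whichever value most copies hold (odd length assumed)."""
    return 1 if sum(bits) > len(bits) // 2 else 0


codeword = encode(0)
codeword[1] ^= 1                     # one copy flips: [0, 1, 0]
recovered = majority_vote(codeword)  # the two intact copies outvote the flipped one
```

The decoder reads every copy directly, which is exactly the step a quantum code cannot take: reading the data qubits would collapse the state being protected.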

The No‑Cloning Theorem forbids making perfect copies of an unknown quantum state, so QEC applies a different strategy by using entanglement rather than duplication. Fundamentally, QEC encodes a single logical qubit into a highly entangled state across multiple physical qubits, safeguarding information not by preventing errors, but by ensuring errors on individual qubits do not corrupt the logical state. Stabilizers are measured repeatedly to reveal syndromes, which indicate the presence and nature of errors without disturbing the encoded data. Decoders then analyze these syndromes to make their best guess about the error pattern and apply the necessary corrections to recover the logical state.
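To make the stabilizer, syndrome, and decoder steps concrete, here is a small sketch of the three-qubit bit-flip code, the simplest textbook example rather than the Surface Code itself. It simulates the state vector in plain Python, and all function names are illustrative. On real hardware the parity checks are measured through ancilla qubits; here they are read from the simulated amplitudes as a shortcut. The code handles single bit flips only and ignores phase errors.

```python
# Amplitudes are indexed by the bitstring q0 q1 q2, with q0 the most significant bit.
NUM_QUBITS = 3


def encode(alpha: complex, beta: complex) -> list[complex]:
    """Logical state alpha|000> + beta|111> (assumes |alpha|^2 + |beta|^2 = 1)."""
    state = [0.0] * 2**NUM_QUBITS
    state[0b000] = alpha
    state[0b111] = beta
    return state


def apply_x(state, qubit):
    """Apply a bit flip (Pauli X) to one physical qubit."""
    mask = 1 << (NUM_QUBITS - 1 - qubit)
    return [state[i ^ mask] for i in range(len(state))]


def syndrome_bit(state, q1, q2):
    """Parity check Z_q1 Z_q2: 0 if the two qubits agree, 1 if they differ."""
    expectation = 0.0
    for i, amp in enumerate(state):
        b1 = (i >> (NUM_QUBITS - 1 - q1)) & 1
        b2 = (i >> (NUM_QUBITS - 1 - q2)) & 1
        expectation += (-1 if b1 != b2 else 1) * abs(amp) ** 2
    return 0 if expectation > 0 else 1


# Lookup-table decoder: syndrome (Z0Z1, Z1Z2) -> qubit to flip back.
DECODER = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state):
    """Measure both parity checks, decode, and undo the inferred bit flip."""
    syndrome = (syndrome_bit(state, 0, 1), syndrome_bit(state, 1, 2))
    faulty = DECODER[syndrome]
    return state if faulty is None else apply_x(state, faulty)


logical = encode(0.6, 0.8)
damaged = apply_x(logical, 1)  # bit flip on the middle qubit
restored = correct(damaged)    # syndrome (1, 1) points at qubit 1
```

The syndrome depends only on which qubit flipped, not on the amplitudes 0.6 and 0.8, which is why the checks can locate the error without revealing or disturbing the encoded information.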

This continuous cycle of detection, decoding, and correction forms the foundation of fault-tolerant quantum computation.

Every quantum processor operates in a noisy environment. Interactions with stray photons, flux noise, and charge fluctuations constantly disturb fragile quantum states. These effects cannot be eliminated entirely, no matter how carefully the hardware is engineered.

Where Does Quantum Error Correction Stand Today?

What was once a question of whether logical qubits could outperform physical ones has become a question of how efficiently and at what scale this advantage can be realized.

In superconducting systems, Google first demonstrated an exponential reduction in logical error rates as the qubit count scaled on the Willow chip. Complementing this, IBM’s multi-round subsystem-code experiments established matching and maximum-likelihood decoders across repeated cycles, a foundational proof that active QEC can track evolving error syndromes.

On the theory side, IBM has also introduced quantum LDPC (qLDPC) codes with high thresholds (~0.7%) and low circuit depth, using hardware-compatible connectivity graphs. These codes promise reduced overhead for future logical architectures.

Trapped ion and neutral atom experiments have demonstrated encoded operations, logical teleportation, and single-cycle QEC routines, confirming cross-platform viability. Neutral-atom devices show the first capabilities to sustain multiple cycles, highlighting the importance of fast, high-fidelity readout and reset.

The Cost of Scaling Logical Qubits

Milestones like Willow mark real progress, but they also make the cost of scaling clear.

Today, achieving sufficiently low logical error rates typically requires 100 to 1,000 physical qubits per logical qubit. Those physical qubits must also be good enough, that is, “below threshold”. The threshold is the noise strength below which QEC helps: in that regime, making the logical qubit bigger lowers its error rate exponentially. For practical purposes, physical error rates will eventually need to sit at least a factor of 10 below the threshold.

Two thresholds matter here.

- The pseudo-threshold is the point where the logical error rate (pL) drops below the physical error rate (p), confirming the code is beneficial at a fixed size.

- The critical threshold is the fundamental noise limit below which increasing the code distance (d) yields an exponential suppression of pL. It is the key measure of QEC scalability.
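The relationship between threshold, code distance, and qubit overhead can be sketched with a commonly used surface-code heuristic, pL ≈ A · (p / p_th)^((d+1)/2). The values of the prefactor A (0.03) and the threshold p_th (1%) below are illustrative assumptions, not figures from this article, and the qubit count assumes a rotated surface code with d² data qubits and d² − 1 measurement qubits.

```python
def logical_error_rate(p, distance, p_th=0.01, prefactor=0.03):
    """Heuristic p_L ~ prefactor * (p / p_th) ** ((d + 1) / 2) for odd distance d."""
    return prefactor * (p / p_th) ** ((distance + 1) // 2)


def physical_qubits(distance):
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * distance**2 - 1


def min_distance(p, target, p_th=0.01, prefactor=0.03, max_distance=101):
    """Smallest odd distance whose heuristic p_L meets the target, or None."""
    if p >= p_th:
        return None  # above threshold, growing the code does not help
    for d in range(3, max_distance + 1, 2):
        if logical_error_rate(p, d, p_th, prefactor) <= target:
            return d
    return None


# Physical error rate a factor of 10 below the assumed threshold.
d = min_distance(p=1e-3, target=1e-12)
qubits = physical_qubits(d)
```

With these assumed values the search returns distance 21, or 881 physical qubits per logical qubit, which falls inside the 100 to 1,000 range quoted above. Above threshold the same formula grows with distance, so no code size helps.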

Meeting these conditions drives demand for larger chip footprints, deeper cryogenic infrastructure, and highly complex control stacks.

Across theory and experiment, the lesson is consistent. Scaling quantum computers is not about eliminating noise. It is about learning how to use it.

A Milestone for Fault-Tolerant Quantum Computing

Google’s Willow chip represents a clear transition into the fault-tolerant regime. For the first time, researchers demonstrated operation below both the pseudo-threshold and the critical threshold.

The team demonstrated this below-threshold regime by implementing the Surface Code across a series of growing qubit arrays, from 3×3 up to 7×7. As the code distance increased, the error rate was systematically suppressed, showing a 2.14-fold reduction with each stage of scaling. This experiment is a major milestone, validating the decades-old theoretical promise of quantum error correction codes. Willow's performance is a direct result of relentless focus on qubit quality and the successful integration of all recent hardware and control breakthroughs necessary to stabilize such a large logical qubit.
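As a rough illustration of what a 2.14-fold reduction per stage implies, the sketch below extrapolates the logical error rate to larger codes. It assumes that each stage corresponds to increasing the code distance by 2 and that the suppression factor stays constant at larger distances; neither the constancy nor the reference error rate used here is claimed by the experiment, so treat the numbers as hypothetical.

```python
LAMBDA = 2.14  # suppression factor per distance step (d -> d + 2), from the text


def projected_error(eps_ref, d_ref, distance, lam=LAMBDA):
    """Extrapolate the logical error per cycle from a reference distance."""
    steps = (distance - d_ref) / 2
    return eps_ref / lam**steps


def distance_for_target(eps_ref, d_ref, target, lam=LAMBDA):
    """Smallest distance (in steps of 2) whose projected error meets the target."""
    distance, eps = d_ref, eps_ref
    while eps > target:
        distance += 2
        eps /= lam
    return distance


# Hypothetical reference point, chosen only for illustration: 1e-3 per cycle at d = 7.
needed = distance_for_target(eps_ref=1e-3, d_ref=7, target=1e-6)
```

Under these assumptions, gaining three orders of magnitude takes ten further distance steps (d = 27), which shows why a larger suppression factor, and therefore better physical qubits, reduces overhead so strongly.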

Willow successfully executed a standard benchmark calculation in under five minutes, a task estimated to require 10²⁵ years on the fastest classical supercomputers, effectively establishing a new bar for quantum utility.

The Challenge of Real-Time Decoding

Detecting an error is one thing; correcting it before the next non-Clifford gate happens is another. That’s the crux of real-time decoding.

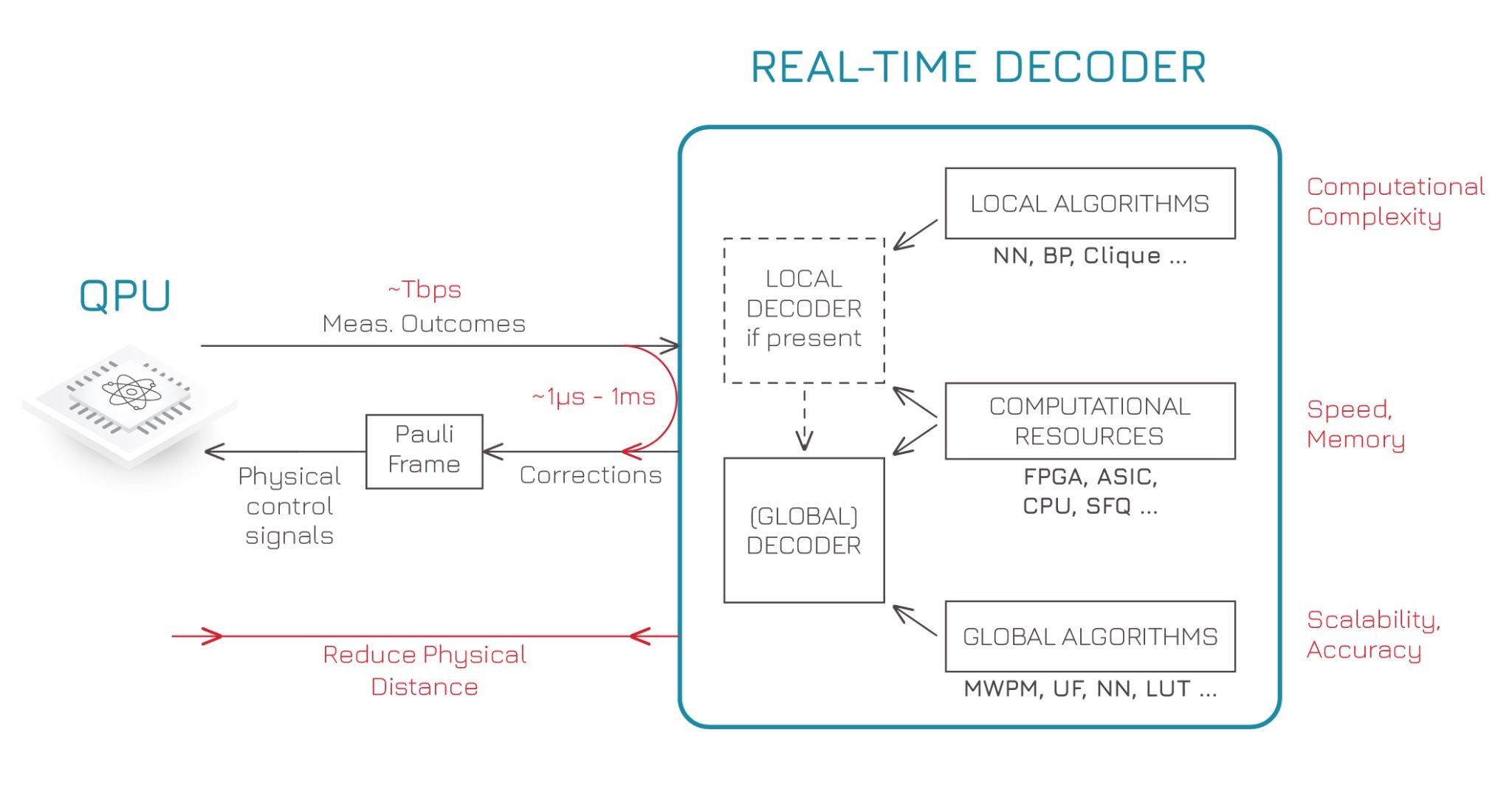

In a fault-tolerant system, stabilizer measurements generate a continuous stream of binary data. That data must be extracted from the cryostat, decoded, and converted into corrective action before subsequent operations occur. If feedback arrives too late, the correction can no longer act.

This challenge breaks down into two main areas:

- Data communication: Measurement bits must get out of the cryostat and through the control stack fast enough. This requires minimizing signal delay, synchronizing clock domains, and ensuring deterministic latency.

- Decoding: Decoders must interpret the bits on time. This is where specialized decoder developers, such as Riverlane, come in, designing hardware decoders that can process thousands of syndromes every microsecond.

Latency ≠ Throughput

It’s worth stating clearly: latency is the time it takes for one message to get through; throughput is how many you can handle per second. A system can have huge throughput and still fail in fault tolerance if the latency is too high. For feedback-based QEC, low latency is essential.

Low latency enables real-time feedback; high throughput ensures scalability across many logical qubits. Both are required, but latency sets the critical limit.
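The distinction can be made concrete with a toy model. The sketch below checks two separate conditions: whether a decoder keeps pace with the stream of syndrome rounds (throughput) and whether a single correction returns before its deadline (latency). Every duration is a hypothetical placeholder, not a measured figure for any real system, and the class and function names are our own.

```python
from dataclasses import dataclass


@dataclass
class FeedbackLoop:
    """One pass through the QEC feedback path; all durations in nanoseconds."""
    readout_ns: float
    transport_ns: float
    decode_ns: float
    actuation_ns: float

    def latency_ns(self) -> float:
        return self.readout_ns + self.transport_ns + self.decode_ns + self.actuation_ns


def meets_deadline(loop: FeedbackLoop, deadline_ns: float) -> bool:
    """Latency check: does one correction arrive before it is needed?"""
    return loop.latency_ns() <= deadline_ns


def backlog_after(rounds: int, round_period_ns: float, decode_interval_ns: float) -> int:
    """Throughput check: syndrome rounds still undecoded once `rounds` were produced."""
    decoded = min(rounds, int(rounds * round_period_ns / decode_interval_ns))
    return rounds - decoded


# A pipelined decoder that finishes one round every 500 ns but takes 5000 ns end to end.
pipelined = FeedbackLoop(readout_ns=300, transport_ns=400, decode_ns=5000, actuation_ns=100)
keeps_up = backlog_after(rounds=1000, round_period_ns=1000, decode_interval_ns=500) == 0
in_time = meets_deadline(pipelined, deadline_ns=2000)
```

Here `keeps_up` is true while `in_time` is false: the decoder never falls behind the syndrome stream, yet each individual correction still arrives too late to act.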

How Control Stacks Support Real-Time QEC

Control systems are beginning to close the feedback loop required for quantum error correction.

Modern control stacks increasingly combine several capabilities into a single execution path: high-speed readout, FPGA-based filtering, and real-time decoders that feed corrections back into the pulse scheduler.

The control stack must minimize latency by executing the syndrome extraction, transmission, decoding, and correction feedback loop with hard real-time guarantees. This requires specialized hardware, often FPGAs or custom ASIC architectures, for both data routing and algorithm execution. Recent reviews summarize avenues for improvement ranging from faster algorithms (e.g., optimized minimum-weight perfect matching) to co-design strategies that deeply integrate the control hardware with the QEC code.

How Qblox Enables Quantum Error Correction Today

Qblox already supports QEC-compatible experiments at scale, even without full real-time decoding.

Our modular control stack scales to hundreds of qubits, a practical necessity given the 100–1000× overhead per logical qubit. Just as importantly, the signal chain adds negligible noise, preserving the below-threshold operation that determines whether error correction is effective at all.

Qblox systems include a deterministic feedback network that can share measurement outcomes across modules in approximately 400 nanoseconds. This provides the latency budget required for fast feedback, active reset, and coordination during repeated QEC cycles.

The next step is tighter integration with real-time decoders running on FPGA, GPU, or hybrid platforms, including support for open interfaces such as QECi and NVIDIA NVQLink. By keeping decoding and control within the same latency domain, systems can meet both throughput and timing requirements.

Through this approach, Qblox supplies the scalable, noise-minimal, and feedback-ready infrastructure required to bring quantum error correction from demonstration to real-time operation.

What Comes Next for Fault-Tolerant Systems

Reaching FTQC requires excellence across the entire stack: high-quality fabrication, scalable qubit arrays, optimized gate operations, low-noise analog electronics, and tightly integrated measurement and feedback. Progress across the field has been rapid: researchers have developed high‑threshold, low‑overhead error‑correcting codes that support larger logical qubits, refined logical gate implementations and lattice‑surgery techniques for modular computation, advanced magic‑state distillation methods for universal quantum operations, and demonstrated real‑time logical memories that persist through multiple cycles. Real‑time decoding has also matured, enabling corrections to feed directly back into qubit‑control loops.

Together, these developments show that FTQC is within reach for the next generation of quantum experiments.

As systems scale, control infrastructure must maintain low noise, predictable timing, and fast feedback between measurement, decoding, and correction. Execution reliability becomes just as important as qubit quality.

That is where control makes the difference.

“Quantum error correction is essential to achieving quantum utility, but real-time QEC remains extremely challenging. Qblox enables real-time QEC at scale by combining low-latency communication with logical orchestration and high-throughput interfaces to real-time decoders. Our control stack supports a wide range of QEC codes, including surface codes and qLDPC codes, through its modular and interconnected design.”

- Francesco Battistel, Roadmap Leader at Qblox

Qblox provides the scalable control backbone required to realize these capabilities:

- Analog performance at scale: maintaining low noise and precise control across hundreds of qubits

- High-throughput, low-latency communication: ensuring timely delivery of stabilizer measurements for feedback and correction

- Decoder integration: linking measurement outcomes to real-time decoding pipelines hosted on classical hardware

- Experiment orchestration: coordinating complex sequences of logical operations, lattice surgery, and multi-qubit experiments

Together, this infrastructure allows labs to scale experiments from single logical qubits to larger, fault-tolerant architectures, bridging the gap from demonstration to practical FTQC.

Learn How Qblox Supports Real-Time QEC

If you are working on quantum error correction or planning systems that need to scale reliably, talk with us about how Qblox supports real-time QEC in practice.
